Each article is scored across ten dimensions.

Every criterion is evaluated within the article itself: no outside research, no judgment of the publication.

Looking for outside research to supplement our metrics? Our Ask Clear-Sight module is built to deliver lateral-reading context and sources for any article.

Triangulation in every metric.

Every metric delivers three layers of validation — a quantitative score, a qualitative explanation of why the article earned that score, and direct evidence pulled from the text. No black boxes. No unexplained numbers. The score tells you where the article lands. The explanation tells you why. The evidence lets you verify it yourself.

Balance

Does the article present the subject fairly or does it favor one perspective?

Balance measures the structural choices an article makes — how it frames the argument, whose voice gets space, and whether the reasoning is sound. It is not about word count. It is about whether the construction of the story gives the reader an honest view of the landscape.

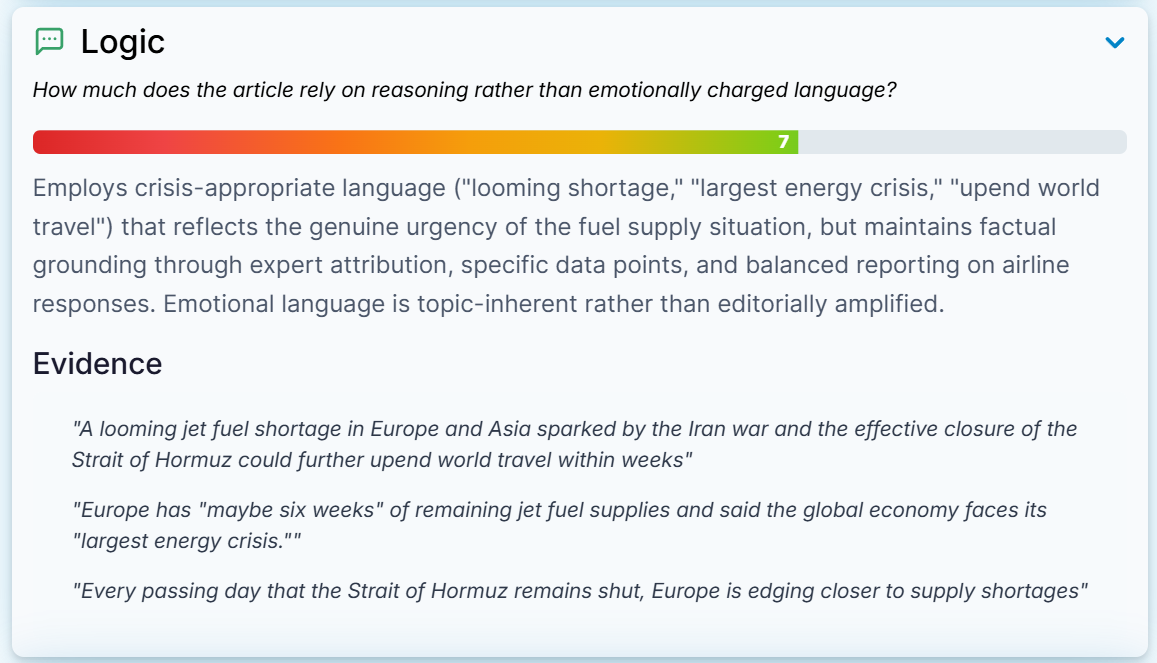

Logic

Does the article inform or does it activate?

Logic measures the emotional intensity of the language used and the effect it is designed to have on the reader. A high score means the article delivers information in a measured tone. A low score means emotional language is doing more work than the facts are.

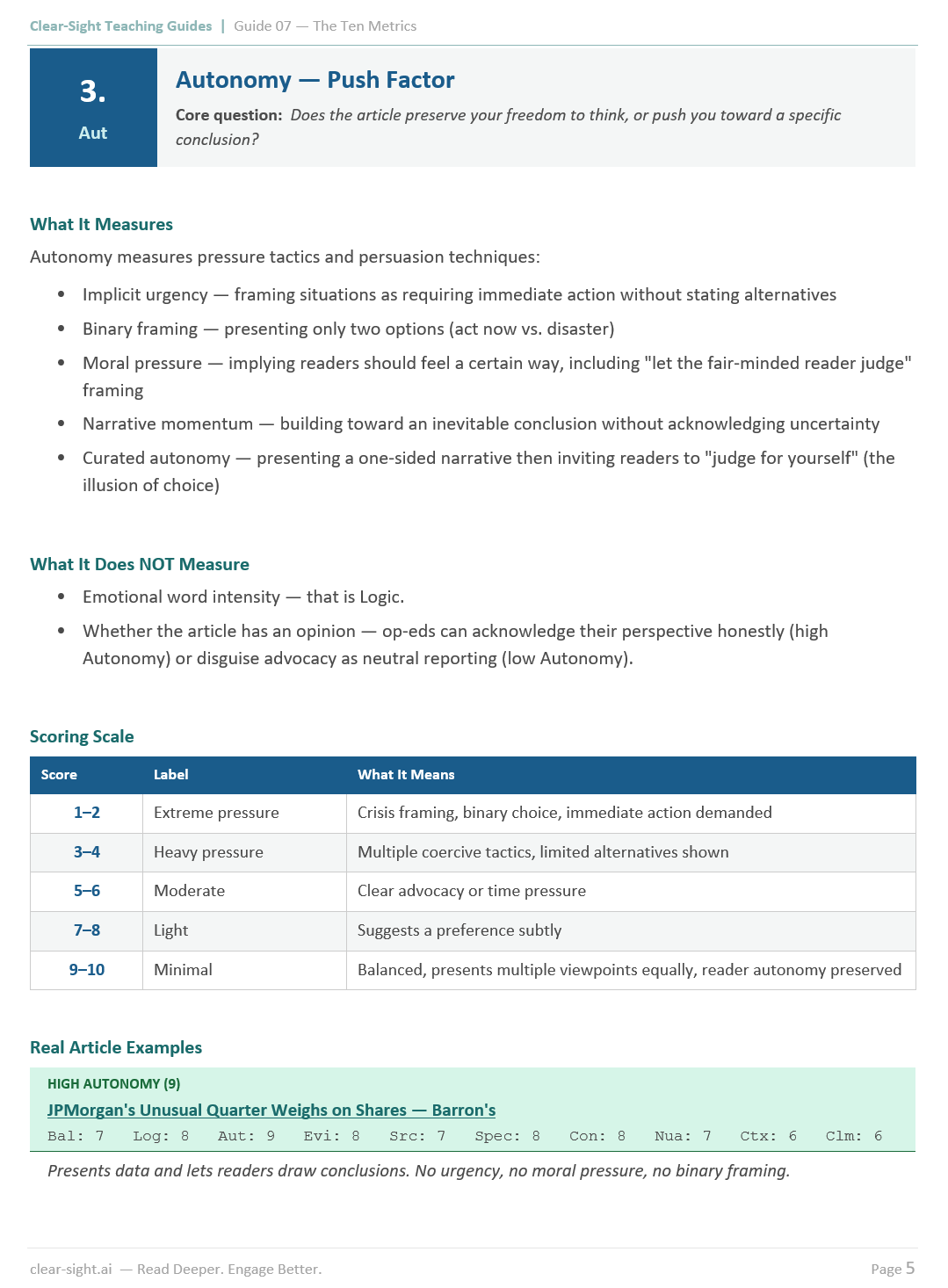

Autonomy

Does the article let you reach your own conclusion?

Autonomy measures the pressure an article applies to steer the reader toward a specific outcome. A high score means the article trusts you to decide. A low score means the structure of the story is designed to leave you with only one acceptable conclusion.

Evidence

How much of this article is verifiable fact versus opinion or assertion?

Evidence measures the ratio of concrete, checkable statements of fact to opinion and unsubstantiated assertion. It does not evaluate whether those statements are correct. It measures how much of the article's weight is carried by verifiable information rather than by stated opinion.

Sourcing

Who is speaking in this article and how transparent is their role?

Sourcing measures the quality, diversity, and transparency of the voices used to build the story. A high score means sources are named, credible, and represent a range of relevant perspectives. A low score means the article leans on anonymous sources, a single voice, or sources whose interests are not disclosed.

Specificity

How precise is the language?

Specificity measures whether the article uses concrete, verifiable detail or relies on vague language that sounds authoritative but resists scrutiny. Precision is a marker of rigor. Vagueness often signals a claim that would not hold up if stated precisely.

Consistency

Does the article hold together?

Consistency measures whether the article's claims, framing, and tone remain coherent from beginning to end. A high score means the story does not contradict itself. A low score means the article shifts in ways that undermine its own argument or presents information that does not align across sections.

Nuance

Does the article treat its subject with the complexity it deserves?

Nuance measures whether the story acknowledges competing interests, legitimate uncertainty, and the inherent difficulty of most important issues — or whether it reduces them to something simpler than they actually are.

Context

Does the article give you what you need to understand the story?

Context measures whether sufficient background, history, and surrounding circumstances are provided for the reader to fully evaluate what they are reading — or whether the story assumes knowledge the reader may not have.

Claims

Are the numbers and statistics in this article used honestly?

Claims measures how the article handles quantitative information — whether data is presented with appropriate framing, whether statistics are contextualized, and whether numbers are used to illuminate or to persuade. Clear-Sight does not fact-check claims against outside sources. It evaluates how claims are constructed and used within the article itself.